The Experiment Log: Why the Moat Isn't the Infrastructure

Anyone can copy a landing page. Nobody can copy 90 days of optimization data. The real asset in a toll position isn't what you built — it's what you learned while running it.

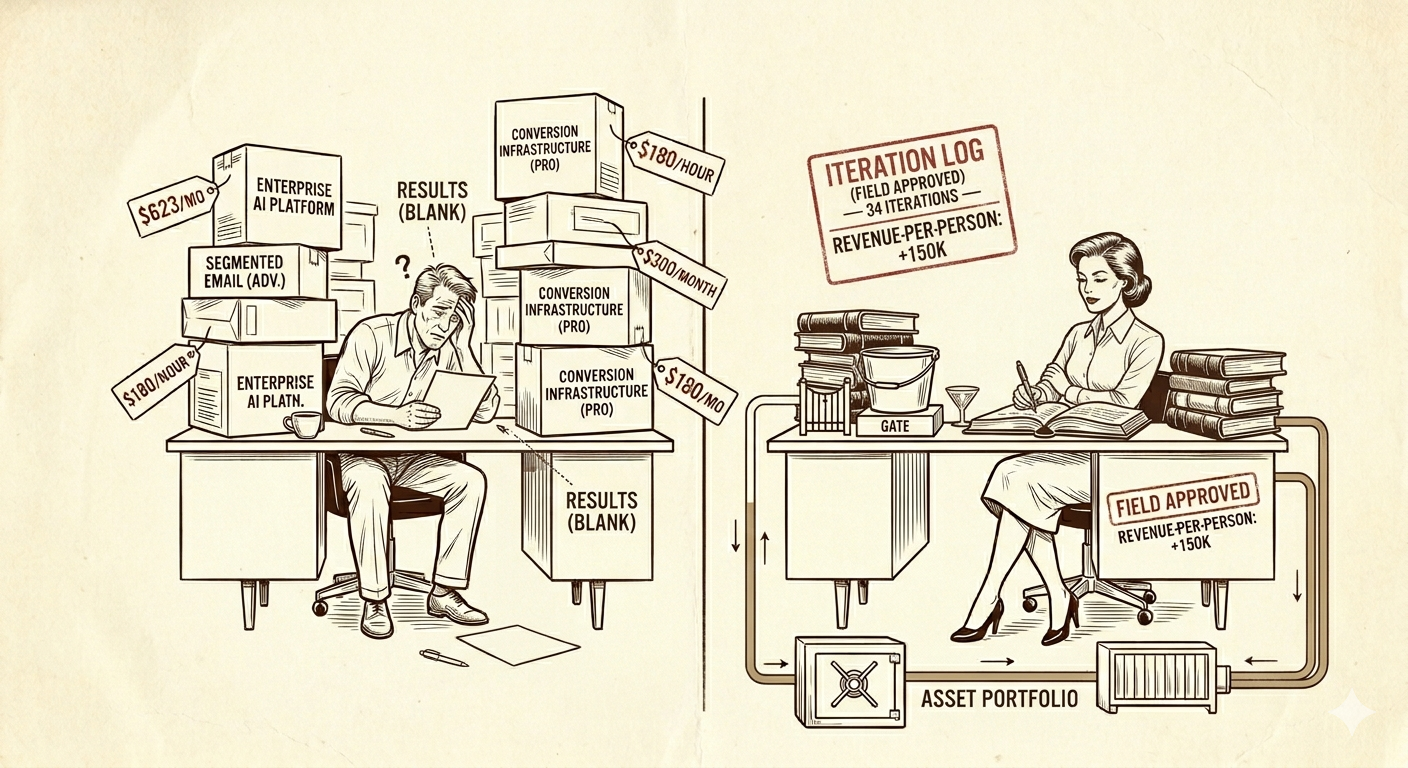

Two operators build the same toll position for the same niche. Same landing page template. Same email sequence structure. Same redirect layer. Same five-email welcome series promoting the same product categories.

After 90 days, Operator A converts at 2.1%. Operator B converts at 4.8%.

The difference isn’t talent or tools. It’s that Operator B ran 34 experiments in 90 days and Operator A ran zero. Operator B tested headline variants, email subject lines, send times, form placement, button colors, pre-sell copy length, product ordering, and price anchoring — logging every test, every result, and every insight. By day 90, Operator B’s system had been through 34 evolutionary cycles. Operator A’s system was still version 1.0, exactly as deployed on the first weekend.

The infrastructure is the commodity. The experiment log is the asset.

What an experiment log actually is

If you’ve ever maintained a lab notebook, a changelog, or a git history with useful commit messages, you already understand the experiment log. It’s a structured record of every change you make to the toll position, what you expected to happen, what actually happened, and what you learned.

A single entry looks like this:

Test #17: Subject line A/B on Email 3
Hypothesis: “The one thing Dale didn’t mention” outperforms “3 things you need before buying silver” because curiosity gaps drive higher open rates in this niche.
Variant A (control): “3 things you need before buying silver” (24.3% open rate)
Variant B (test): “The one thing Dale didn’t mention” (31.7% open rate)
Result: +7.4pp open rate, +2.1pp click-through rate, +$340/month in attributed revenue
Learning: Curiosity gaps outperform list-format subjects in this segment. Apply to emails 4 and 5.

That’s it. A hypothesis, two variants, a result, a learning. A few lines in a document. But after 34 of these entries, you have a dataset no competitor can replicate without running their own 90 days of experiments, and during those 90 days, you’ve moved on to experiments 35 through 68.
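If you keep the log in code rather than in a document, each entry maps naturally onto a small record type. The sketch below shows one possible shape. It assumes a Python dataclass serialized to JSON Lines. The class name `ExperimentEntry`, its fields and the file format are illustrative choices, not a prescribed schema. It is filled in with the Test #17 numbers from the entry above.

```python
import json
from dataclasses import dataclass, asdict

@dataclass
class ExperimentEntry:
    """One row of the experiment log (hypothetical schema)."""
    test_id: int
    title: str
    hypothesis: str
    control: str
    variant: str
    control_rate: float   # metric for the control, as a fraction (0.243 = 24.3%)
    variant_rate: float   # same metric for the test variant
    learning: str

    def lift_pp(self) -> float:
        """Absolute lift in percentage points (variant minus control)."""
        return round((self.variant_rate - self.control_rate) * 100, 1)

    def to_json_line(self) -> str:
        """Serialize the entry for appending to a JSON Lines log file."""
        return json.dumps(asdict(self))

entry = ExperimentEntry(
    test_id=17,
    title="Subject line A/B on Email 3",
    hypothesis="Curiosity gaps drive higher open rates in this niche",
    control="3 things you need before buying silver",
    variant="The one thing Dale didn't mention",
    control_rate=0.243,
    variant_rate=0.317,
    learning="Curiosity gaps outperform list-format subjects; apply to emails 4 and 5",
)
print(entry.lift_pp())  # → 7.4
```

You can append one `entry.to_json_line()` per test to a file. That gives you a log you can filter and aggregate later, instead of reading it back by hand.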

Why the experiment log is the real moat

In Building Inside Someone Else’s Business, the question was raised: why doesn’t the partner just copy your system once they see it working? The first answer was about creator psychology — creators want to create, not operate. That’s true. But the deeper answer is about what’s actually defensible.

The landing page is visible. The experiment log is not. A creator or a competing operator can visit your landing page and see the current version — the headline, the copy, the layout, the form placement. What they can’t see is the 33 previous versions that didn’t work as well, and the specific data about why this version outperforms them. They can copy the output. They can’t copy the intelligence.

Copying the current version gets a competitor version 34. But version 34 was optimized for this specific audience at this specific moment. By the time a competitor reverse-engineers and deploys their copy, you’re on version 41. The gap doesn’t close; it widens, because each experiment builds on what previous experiments learned.

The compounding is in the learning, not the infrastructure. Experiment #1 taught you that curiosity-gap headlines work in this niche. Experiment #7 taught you that Tuesday morning sends outperform Thursday evening. Experiment #14 taught you that pre-sell pages under 400 words convert better than pages over 800 words for this audience. Experiment #22 taught you that the third email in the sequence is where most revenue is generated, not the first.

Each learning narrows the optimization space. By experiment 30, you’re not guessing — you’re making informed, data-backed adjustments with predictable outcomes. A new entrant starting from scratch faces the full optimization space. You face a much smaller one, because you’ve already eliminated the dead ends.

The experiment cadence that works

A toll position has a limited number of moving parts. That’s a feature, not a limitation — it means the experiment space is manageable for a solo operator.

Variables you can test:

On the landing page: headline (the highest-impact single variable), subheadline, pre-sell copy length, form placement (above fold vs. below), button text, button color, image vs. no image, social proof placement, product ordering if multiple products are featured.

In the email sequence: subject line, preview text, send time, send day, copy length, call-to-action placement, number of links per email, personalization level, sequence length (5 emails vs. 7 vs. 10), delay between emails.

In the redirect layer: which merchant offer to send traffic to, split ratios between merchant options, time-delayed offers (show offer A for the first 48 hours, then switch to offer B).
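Each redirect-layer experiment comes down to two small decisions per click. The first is which bucket a visitor falls into for a split. The second is whether a time-delayed switch has kicked in.

The sketch below makes a few assumptions:
- You have a stable visitor ID, for example from a cookie.
- You have stored a first-seen timestamp for that visitor.
- The function names are hypothetical, and the 48-hour default simply mirrors the example above.

Hashing the ID, instead of drawing a random number on every click, keeps each visitor on the same offer across repeat visits. That keeps the split clean.

```python
import hashlib
from datetime import datetime, timedelta
from typing import Sequence

def bucket(visitor_id: str, experiment: str) -> float:
    """Deterministically map a visitor to [0, 1) so repeat clicks get the same offer."""
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode()).digest()
    # Keep 53 bits so the quotient is exactly representable and stays below 1.0.
    return (int.from_bytes(digest[:8], "big") >> 11) / 2**53

def split_offer(visitor_id: str, offers: Sequence[str], weights: Sequence[float],
                experiment: str = "merchant-split") -> str:
    """Weighted split between merchant offers, e.g. weights [0.7, 0.3]."""
    point = bucket(visitor_id, experiment) * sum(weights)
    cumulative = 0.0
    for offer, weight in zip(offers, weights):
        cumulative += weight
        if point < cumulative:
            return offer
    return offers[-1]  # guard against floating-point rounding at the top edge

def time_delayed_offer(first_seen: datetime, now: datetime, early: str, late: str,
                       window: timedelta = timedelta(hours=48)) -> str:
    """Show `early` during the first `window` after first contact, then `late`."""
    return early if now - first_seen < window else late
```

Changing the `experiment` string reshuffles the buckets. A new split test therefore does not inherit the assignment pattern of the previous one.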

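The weekly cadence: one test per week, running for a minimum of 7 days to account for day-of-week variation. Treat 100 visitors (for landing page tests) or 200 subscribers (for email tests) as an absolute floor, not as a guarantee of statistical significance. The sample you actually need depends on your baseline rate and on the size of the lift you want to detect. At a conversion rate near 2%, reliably detecting a lift of about one percentage point takes thousands of visitors per variant.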
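To see how large a sample a given test needs, you can use the standard normal-approximation formula for comparing two proportions. The sketch below assumes:
- a two-sided test at 5% significance;
- 80% power;
- a 2.1% → 3.0% landing-page target, which is a hypothetical goal;
- email rates taken from Test #17.

The function name is illustrative.

```python
from math import ceil, sqrt
from statistics import NormalDist

def sample_size_per_variant(p_control: float, p_variant: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors (or subscribers) needed in EACH arm of a two-sided
    two-proportion test to detect a change from p_control to p_variant."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)   # about 1.96 for alpha = 0.05
    z_beta = z.inv_cdf(power)            # about 0.84 for 80% power
    p_bar = (p_control + p_variant) / 2
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p_control * (1 - p_control)
                                 + p_variant * (1 - p_variant))) ** 2
    return ceil(numerator / (p_variant - p_control) ** 2)

print(sample_size_per_variant(0.021, 0.030))  # landing page: 2.1% -> 3.0%
print(sample_size_per_variant(0.243, 0.317))  # email opens: 24.3% -> 31.7%
```

The first call comes out at roughly 4,800 visitors per variant. The second comes out at a little under 600 subscribers per variant, even though the effect is as large as Test #17’s. Small lifts on low baseline rates are what make landing-page tests expensive in traffic.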

At one test per week, you run 52 experiments in a year. At the end of that year, your toll position has been through 52 optimization cycles. A new competitor entering the space in month 13 faces 12 months of compound learning they’d need to replicate — and they can’t, because the experiment log isn’t public.

The discipline required: 30 minutes per week. Each Monday, check last week’s results, log the outcome, and start the next test. This is not a time-intensive activity, but it’s the one most operators skip because the individual impact of any single test feels small. That’s the same logic that makes most people skip the gym: any single workout changes nothing, but 52 workouts change everything.

What makes engineers uniquely good at this

The experiment log is, functionally, a scientific method applied to commerce. Hypothesis, test, result, iterate. If your background includes any of the following, you already have the instinct:

A/B testing in product development. If you’ve run feature flags or split tests on a product, you’ve done this exact thing — just for different metrics. Swap “user retention” for “email click-through rate” and the framework is identical.

Performance optimization. If you’ve profiled code, identified bottlenecks, and measured the impact of changes, the mental model transfers directly. A landing page that converts at 2.1% is a slow endpoint. The experiment log is how you find and fix the bottleneck.

Data analysis. If you can read a p-value, calculate statistical significance, or interpret a confidence interval, you’re ahead of most of the marketing operators running these same tests. Most marketers test by gut. Engineers test by measurement. In this domain, measurement wins.
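Here is what reading significance looks like in practice: a pooled two-proportion z-test, plus a 95% confidence interval for the lift. The Test #17 entry reports only rates, so the counts below are hypothetical, assuming 1,000 sends per variant. The function name is illustrative.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_test(successes_a: int, n_a: int, successes_b: int, n_b: int,
                        confidence: float = 0.95):
    """Pooled two-proportion z-test plus a Wald confidence interval for p_b - p_a."""
    p_a, p_b = successes_a / n_a, successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se_pooled = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se_pooled
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # two-sided
    # Unpooled standard error for the interval around the observed difference.
    se_diff = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    margin = NormalDist().inv_cdf(0.5 + confidence / 2) * se_diff
    return z, p_value, (p_b - p_a - margin, p_b - p_a + margin)

# Hypothetical counts: 1,000 sends per variant at Test #17's open rates.
z, p, ci = two_proportion_test(243, 1000, 317, 1000)
print(f"z = {z:.2f}, p = {p:.4f}, 95% CI for lift = {ci[0]:.3f} to {ci[1]:.3f}")
```

With those assumed counts, the lift is clearly significant (z around 3.7), and the interval runs from roughly 3.5 to 11.3 points. At about 200 sends per variant, similar rates give a z of only about 1.6, which does not clear p < 0.05. This is the measurement discipline that separates a real winner from noise.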

Version control. If you instinctively maintain a changelog, you instinctively maintain an experiment log. They’re the same discipline: record what changed, why, and what happened.

The reason most toll positions underperform isn’t that the operator chose the wrong tools or the wrong niche. It’s that they deployed version 1.0 and never iterated. For an engineer — someone trained in iterative development, measurement, and systematic optimization — this is the most natural competitive advantage imaginable. You’re not learning a new skill. You’re applying an existing one to a new domain.

The experiment log as leverage for your next partner

The experiment log doesn’t just improve your current toll position. It accelerates every future one.

When you approach partner number two, a creator in an adjacent niche, you bring 90 days of tested knowledge from partner one. You know that curiosity-gap subject lines beat list-format subject lines by about 7 points of open rate. You know that 350-word pre-sell pages outperform 800-word pages. You know that Tuesday morning sends drive the highest click rates.

Not all of that will transfer — different niches have different audience behaviors. But 60-70% of it does, because the underlying psychology of email capture, pre-sell, and purchase decisions is more consistent across niches than most people expect.

The practical impact: your second toll position reaches peak performance in 45 days instead of 90, because you start at a higher baseline. Your third reaches peak in 30 days. By partner five, you’re deploying near-optimized systems on day one and using your experiment cadence for the marginal 10-15% of niche-specific optimization.

That’s a compounding curve no new entrant can match. Your portfolio doesn’t just earn more over time — it optimizes faster over time.

What does AI change about experimentation?

AI compresses the experiment cycle and expands the experiment scope.

Hypothesis generation. Instead of deciding what to test next based on intuition, an AI system can analyze your historical data and suggest the highest-expected-impact test. “Based on the last 12 experiments, subject line changes in emails 3-5 have produced 3× the revenue impact of landing page changes. The next highest-leverage test is likely a subject line variant on email 4, which hasn’t been tested since experiment #9.”
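The ranking logic in that quote can be approximated without any AI at all. The sketch below uses a plain heuristic:
1. Average attributed revenue by test area.
2. Within the best area, pick the element that has gone longest without a test.

The log rows are hypothetical, apart from Test #17’s +$340/month. The function name is illustrative. One limitation: elements that have never been tested do not appear in the log, so the heuristic never suggests them.

```python
from collections import defaultdict

# Hypothetical log rows: (experiment number, area, element tested, attributed $/month change)
LOG = [
    (3, "landing page", "headline", 60),
    (5, "email subject", "email 3", 210),
    (9, "email subject", "email 4", 180),
    (11, "landing page", "form placement", 40),
    (12, "email subject", "email 5", 240),
    (14, "landing page", "pre-sell length", 90),
    (17, "email subject", "email 3", 340),
]

def suggest_next_test(log):
    """Pick the area with the highest mean revenue impact, then the element
    in that area whose most recent test is the oldest."""
    impact = defaultdict(list)
    for _num, area, _element, revenue in log:
        impact[area].append(revenue)
    best_area = max(impact, key=lambda a: sum(impact[a]) / len(impact[a]))
    last_tested = {}
    for num, area, element, _revenue in log:
        if area == best_area:
            last_tested[element] = max(num, last_tested.get(element, 0))
    stalest = min(last_tested, key=last_tested.get)
    return best_area, stalest, last_tested[stalest]

print(suggest_next_test(LOG))  # → ('email subject', 'email 4', 9)
```

An AI system adds value on top of this by proposing *why* an area is underperforming. The underlying "highest impact, least recently tested" ranking, however, is simple enough to compute directly from the log.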

Automated variant creation. AI can generate 10 headline variants in seconds, each varied along a specific dimension (curiosity, specificity, urgency, social proof). You pick the two most promising. This eliminates the creative bottleneck that causes most operators to test less frequently than they should.

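Faster significance detection. AI monitoring can track a running test and end it mid-week once the winner is clear, so the next test starts sooner. This only works if the stopping rule is designed for repeated looks at the data, such as a sequential test. Checking an ordinary significance test every day and stopping at the first p < 0.05 declares considerably more false winners than the nominal 5%. Stopping early also gives up some of the day-of-week coverage that the 7-day minimum exists to provide. Over a year, this might raise the count from 52 tests to 65-70, because clear winners are sometimes identified in 4 days instead of 7.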
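Stopping at the first significant result is riskier than it sounds. The simulation below runs many A/A tests, where both arms share the same true conversion rate, so every declared winner is a false positive. It compares two rules:
- a single look at the end of a 7-day test;
- a daily look that stops at the first p < 0.05.

Traffic volume, conversion rate and seed are arbitrary assumptions, and the function names are illustrative. Methods built for early stopping, such as group sequential designs with O’Brien-Fleming-style boundaries or always-valid p-values, avoid this inflation. This sketch only demonstrates the problem; it does not implement one of those fixes.

```python
import random
from statistics import NormalDist

def p_value(conv_a: int, conv_b: int, n: int) -> float:
    """Two-sided pooled z-test p-value for two equal-sized arms of n each."""
    pooled = (conv_a + conv_b) / (2 * n)
    se = (pooled * (1 - pooled) * 2 / n) ** 0.5
    if se == 0:
        return 1.0
    z = (conv_b - conv_a) / n / se
    return 2 * (1 - NormalDist().cdf(abs(z)))

def false_positive_rates(sims=1000, days=7, daily_n=100, rate=0.10,
                         alpha=0.05, seed=7):
    """A/A simulation: both arms share `rate`, so every 'winner' is a false positive.
    Returns (rate with daily peeking, rate with one look at the end)."""
    rng = random.Random(seed)
    peek_hits = fixed_hits = 0
    for _ in range(sims):
        conv_a = conv_b = n = 0
        flagged = False
        for _day in range(days):
            conv_a += sum(rng.random() < rate for _ in range(daily_n))
            conv_b += sum(rng.random() < rate for _ in range(daily_n))
            n += daily_n
            # Naive rule: declare a winner the first day p drops below alpha.
            if not flagged and p_value(conv_a, conv_b, n) < alpha:
                flagged = True
        peek_hits += flagged
        fixed_hits += p_value(conv_a, conv_b, n) < alpha  # single end-of-test look
    return peek_hits / sims, fixed_hits / sims

peek, fixed = false_positive_rates()
print(f"daily peeking: {peek:.1%} false winners, single look: {fixed:.1%}")
```

The single end-of-test look stays near the nominal 5%. The daily-peeking rule flags a false winner noticeably more often, and that gap is what a proper sequential rule exists to close.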

Cross-partner pattern recognition. When you’re running experiments across five partners simultaneously, an AI system can identify patterns that hold across niches (“curiosity gaps outperform list formats everywhere”) versus patterns that are niche-specific (“urgency messaging works in preparedness but backfires in fitness”). This accelerates the 60-70% knowledge transfer between partners.
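The simplest version of that cross-partner check is sign agreement: a pattern whose lift points the same way in every niche is a transfer candidate, and one that flips is niche-specific. The sketch below is that crude rule, not an AI system. The lifts and the third niche are hypothetical, and the function name is illustrative. A real version should count only statistically significant results and weight them by sample size.

```python
# Hypothetical lifts (percentage points) per pattern, per partner niche.
RESULTS = {
    "curiosity-gap subject": {"preparedness": 6.8, "fitness": 3.9, "personal finance": 5.2},
    "urgency messaging": {"preparedness": 2.4, "fitness": -1.7, "personal finance": 0.6},
    "shorter pre-sell page": {"preparedness": 1.1, "fitness": 0.8},
}

def classify_patterns(results, min_niches=3):
    """Label each pattern by whether its lift points the same way in every niche."""
    labels = {}
    for pattern, lifts in results.items():
        if len(lifts) < min_niches:
            labels[pattern] = "insufficient data"
        elif all(lift > 0 for lift in lifts.values()):
            labels[pattern] = "cross-niche win"
        elif all(lift < 0 for lift in lifts.values()):
            labels[pattern] = "cross-niche loss"
        else:
            labels[pattern] = "niche-specific"
    return labels

for pattern, label in classify_patterns(RESULTS).items():
    print(f"{pattern}: {label}")
```

Patterns labeled "cross-niche win" are the ones to deploy on day one with a new partner. The "niche-specific" ones go back into that partner’s experiment queue.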

Thirty minutes a week. One test. One log entry. It feels like nothing on any given Monday. But 52 Mondays from now, you’re sitting on a dataset no competitor can copy without burning their own year to build it. The infrastructure is the commodity. What you learned running it — that’s the thing nobody can reverse-engineer from a screenshot.

Want to build your intelligence moat?

This article covered the data dimension. The newsletter delivers measurement frameworks and signal patterns every week.
